“Alexa, book me a facial.” Simple? Yes. Compliant? Not always.

As voice assistants like Alexa, Google Assistant, and Siri become the new gatekeepers for service-based bookings, local businesses are entering a high-conversion channel that is also a legal minefield. This post explores the privacy and regulatory traps in voice commerce for local providers such as medspas, law firms, and plumbers, and how to protect yourself without sacrificing UX.

Why Local Businesses Are Rushing Into Voice Commerce

Voice-first booking is gaining traction, especially among:

- Medspas offering Botox or laser consultations

- Plumbers handling emergency calls

- Lawyers scheduling free initial consults

Convenience and speed are driving this shift. But are you capturing these bookings both ethically and legally?

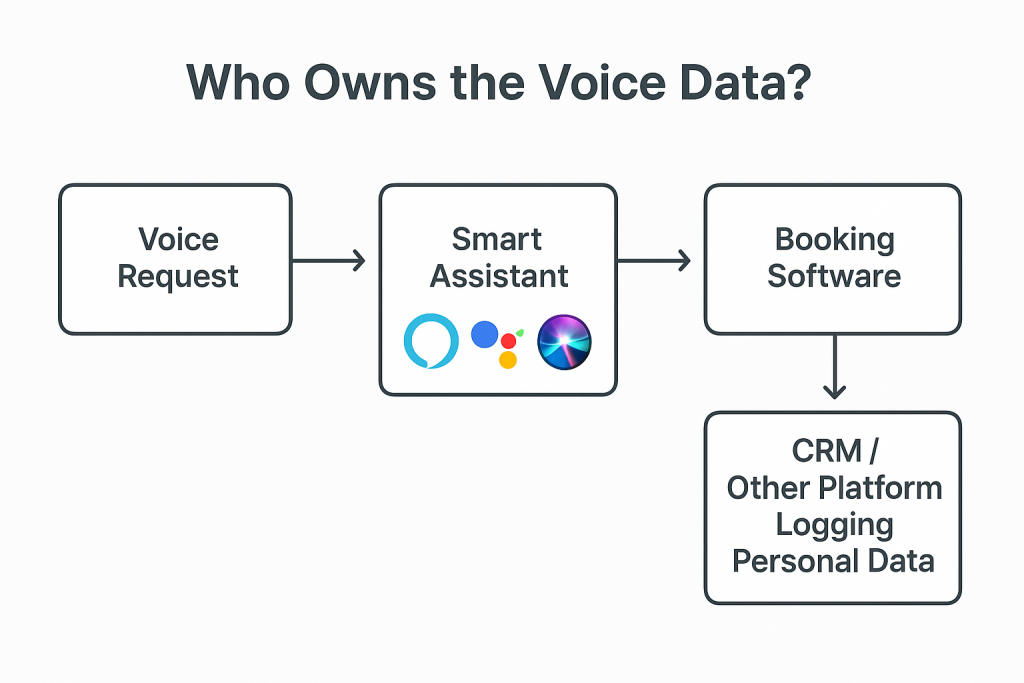

Who Owns the Voice Data?

Big Tech’s Fine Print

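- Amazon Alexa: Stores voice recordings by default until the user deletes them or turns on automatic deletion

- Google Assistant: Links voice queries to the user's Google account, where that activity may be used for personalization and ads depending on account settings

- Apple Siri: Ties requests to a random identifier rather than an Apple ID, but still processes them, and keeps audio only if the user opts in to help improve Siri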

Even if you don’t store the conversation, the platform might.

The Booking Data Dilemma

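- If your Alexa skill or Google Action routes bookings through Calendly, Square, or a CRM, those tools may log personally identifiable information (PII) or other sensitive data

- HIPAA-regulated health information or confidential legal details passed over voice can become a violation if any platform or vendor in the chain isn’t bound by the right agreements (for HIPAA, a business associate agreement)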
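One way to limit what downstream tools can log is to mask obvious identifiers before a transcript leaves your own system. This is a hypothetical sketch. The `redact_for_logging` function and its two regular expressions are illustrative only. They catch just US-style phone numbers and email addresses, and they do not replace a vendor agreement or a real PII-detection service.

```python
import re

# Illustrative patterns only: US-style phone numbers and email addresses.
PHONE_RE = re.compile(r"(?<!\d)(?:\+?1[-.\s]?)?\(?\d{3}\)?[-.\s]?\d{3}[-.\s]?\d{4}(?!\d)")
EMAIL_RE = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")


def redact_for_logging(transcript: str) -> str:
    """Mask emails and phone numbers before a transcript is sent to a third-party tool."""
    # Emails first, so digits inside an address are never read as a phone number.
    redacted = EMAIL_RE.sub("[EMAIL]", transcript)
    return PHONE_RE.sub("[PHONE]", redacted)


if __name__ == "__main__":
    print(redact_for_logging("Call me at (555) 123-4567 or email jane.doe@example.com"))
    # -> Call me at [PHONE] or email [EMAIL]
```

Anything the patterns miss still gets through. That includes names, street addresses, and medical terms. Treat this as one layer for logs and analytics, not as the whole defense.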

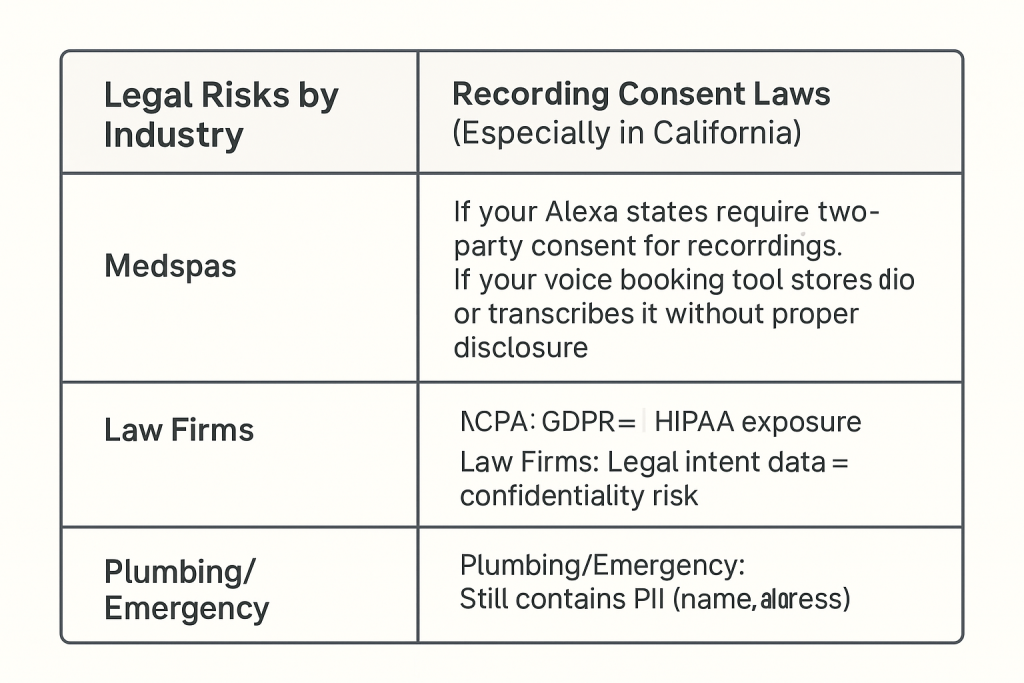

Legal Risks by Industry

Recording Consent Laws (Especially in California)

California and several other states require the consent of every party (often called “two-party consent”) before a conversation is recorded. If your voice booking tool stores or transcribes audio without proper disclosure, you could be in violation.

CCPA, GDPR, and HIPAA Violations

- Medspas: Medical data = HIPAA exposure

- Law Firms: Legal intent data = confidentiality risk

- Plumbing/Emergency: Still contains PII (name, address, phone)

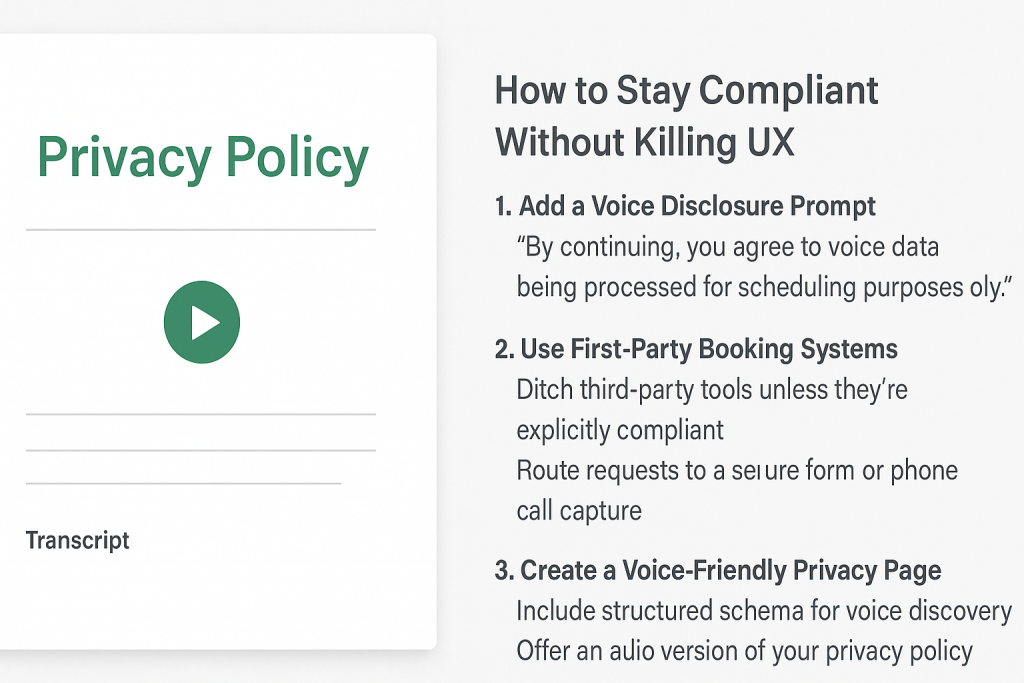

How to Stay Compliant Without Killing UX

1. Add a Voice Disclosure Prompt

“By continuing, you agree to voice data being processed for scheduling purposes only.”

Play it at the start of your voice assistant skill, and show it on screen for smart-display devices.
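Here is a minimal sketch of that prompt as the JSON body an Alexa custom skill returns. It is written in plain Python so it does not depend on any SDK. The helper names (`disclosure_response`, `record_consent`, `may_proceed`) and the `consent` session attribute are hypothetical. The closing question is also an addition, there so the user gives an explicit yes. In a real skill, the answer would typically arrive as the built-in `AMAZON.YesIntent` or `AMAZON.NoIntent`.

```python
import json

# Disclosure text from the post, plus a closing question (an assumption) so the
# user gives an explicit yes or no.
DISCLOSURE = (
    "By continuing, you agree to voice data being processed "
    "for scheduling purposes only. Would you like to continue?"
)


def disclosure_response() -> dict:
    """Alexa-style response: speak the disclosure first and keep the session open."""
    return {
        "version": "1.0",
        "sessionAttributes": {"consent": "pending"},
        "response": {
            "outputSpeech": {"type": "PlainText", "text": DISCLOSURE},
            "reprompt": {
                "outputSpeech": {"type": "PlainText", "text": "Please say yes or no."}
            },
            "shouldEndSession": False,
        },
    }


def record_consent(session_attributes: dict, answered_yes: bool) -> dict:
    """Return a copy of the session attributes with the user's answer recorded."""
    updated = dict(session_attributes)
    updated["consent"] = "granted" if answered_yes else "declined"
    return updated


def may_proceed(session_attributes: dict) -> bool:
    """Booking (and any transcript storage) proceeds only after an explicit yes."""
    return session_attributes.get("consent") == "granted"


if __name__ == "__main__":
    print(json.dumps(disclosure_response(), indent=2))
```

This sketch gates on an explicit yes rather than on silence. That is a conservative choice, and it also fits better with the recording-consent rules discussed above.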

2. Use First-Party Booking Systems

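- Drop third-party tools unless the vendor explicitly commits to compliance (for health data, by signing a business associate agreement)

- Route requests to a secure web form or a phone call instead of capturing details inside the voice app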

3. Create a Voice-Friendly Privacy Page

- Include structured data (such as schema.org markup) so voice assistants can discover the page

- Offer an audio version of your privacy policy for smart speaker playback
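One concrete form of structured data here is schema.org’s `speakable` property. It marks the sections of a page that are suited to text-to-speech. The sketch below emits JSON-LD for a privacy page. The `privacy_page_jsonld` helper, the URL, and the `.privacy-summary` CSS selector are placeholders. Search engines have documented `speakable` mainly for news content, so whether assistants actually read a privacy page aloud is uncertain.

```python
import json


def privacy_page_jsonld(url: str, name: str, css_selectors: list[str]) -> str:
    """Build a schema.org WebPage JSON-LD snippet with a speakable section."""
    data = {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": name,
        "url": url,
        "speakable": {
            "@type": "SpeakableSpecification",
            # CSS selectors pointing at the plain-language summary to read aloud.
            "cssSelector": css_selectors,
        },
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    snippet = privacy_page_jsonld(
        "https://example.com/privacy", "Privacy Policy", [".privacy-summary"]
    )
    # Embed the output in the page inside <script type="application/ld+json">.
    print(snippet)
```

A short, plain-language summary section is also a natural source for the audio version of the policy.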

Industry-Specific Recommendations

- Plumbers: Ask only for name and zip code to limit data exposure

- Medspas: Never collect treatment history or medical conditions over voice

- Law Firms: Use voice only to schedule; move legal details to a secure call or intake form
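These rules amount to a per-industry allowlist of fields the voice flow may keep. The sketch below uses hypothetical field names and a hypothetical `minimize` helper. Only the plumber entry (name and ZIP code) comes from the recommendations above. The medspa and law-firm sets are placeholder choices that deliberately leave out any medical or legal detail.

```python
# Hypothetical allowlists: the only fields a voice booking flow may keep.
ALLOWED_FIELDS: dict[str, set[str]] = {
    "plumber": {"name", "zip_code"},
    "medspa": {"name", "phone", "preferred_time"},
    "law_firm": {"name", "phone", "preferred_time"},
}


def minimize(industry: str, captured: dict[str, str]) -> tuple[dict[str, str], set[str]]:
    """Keep only allowlisted fields; report the rest so they can be rerouted.

    Unknown industries get an empty allowlist, so everything is dropped (fail closed).
    """
    allowed = ALLOWED_FIELDS.get(industry, set())
    kept = {key: value for key, value in captured.items() if key in allowed}
    dropped = set(captured) - allowed
    return kept, dropped


if __name__ == "__main__":
    kept, dropped = minimize(
        "medspa", {"name": "Ana", "preferred_time": "Tue 3pm", "condition": "rosacea"}
    )
    print(kept)             # {'name': 'Ana', 'preferred_time': 'Tue 3pm'}
    print(sorted(dropped))  # ['condition']
```

Failing closed for unknown industries means a misconfigured flow collects nothing rather than everything. A non-empty `dropped` set is a natural trigger for handing the caller off to a secure call or intake form.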

Conclusion: Don’t Let Voice Commerce Talk You Into Trouble

Voice commerce can be a powerful lead-generation channel, but it also exposes local businesses to liability they may not see coming. Data ownership, recording laws, and privacy compliance aren’t optional. They’re essential.

✅ Want to make sure your Alexa or Siri booking flow is compliant? Request a Free Voice Commerce Audit →